Internal combustion engines date back to 1833. By 1860, functional internal combustion engines were being experimented with, and by 1872, the principles of intake, compression, combustion and exhaust were established. In 1903, the Wright brothers put an internal combustion engine in an aircraft. By 1908, the U.S. Army had ordered a “heavier than air flying machine” from the Wright brothers.

We call them “early adopters”: the people who want a jump on whatever the newest technology has to offer. In a curious coincidence, adolescents and the military share a penchant for being on the cutting edge of technology, both for bragging rights, and the military also for killing people who have meager ability to resist the new weapons. If it is your military that has the technological edge, you will be all for it. But being on the leading edge doesn’t guarantee that you will prevail; just ask the bumpkins who lost billions betting on Bitcoin.

While it is easy to sing the praises of what technology has brought us, it would be foolish not to recognize the downsides of our technological gains. World War I was the first “modern war,” in which the new technology met managers (called generals) trained only in Old World warfare, and millions of soldiers died. Corporal Hitler applied technology in appalling ways never before witnessed, with an efficiency that shook the world to its core; millions suffered and perished at the hands of a man willing to apply technology in the most nefarious ways imaginable.

Naturally, those who create technological innovations pitch their ideas as benign, for all of the obvious reasons. Firearms were meant to provide food and protection, but evil hearts found ways to use them for heinous ends. The internet was intended for exchanging information, not as a mouthpiece for dim-witted fanatics to disseminate their twisted “theories,” or as a hunting ground for sophisticated thieves who prey upon the naïve.

Moore’s Law says that the number of transistors in a dense integrated circuit (IC) doubles about every two years. Because the doubling happens every couple of years rather than every few decades, computing power has historically grown at a pace measured in years, not generations. It seems rather clear that technological advances occur too fast for humans to adapt to them. In the case of the United States, the legislatures fell too far behind; by the time they got around to regulating technology, the technology firms had billions of dollars in their bank accounts and had paid off the people’s representatives, politicians the firms now carry around in their pockets like so many nickels and dimes.
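The arithmetic behind a doubling every two years shows why the pace is so hard to keep up with. As a simplified sketch, assuming a clean doubling every two years (real chips have never tracked the curve exactly), the transistor count after t years, starting from N_0, is:

$$
N(t) = N_0 \cdot 2^{t/2}, \qquad N(10) = 2^{5} N_0 = 32\,N_0, \qquad N(20) = 2^{10} N_0 = 1024\,N_0
$$

On that idealized curve, a legislator who serves for forty years would start and end with chips about 2^20, or roughly a million, times apart in transistor count.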

In 2020, the World Economic Forum published “17 ways technology could change the world by 2025” (their capitalization, not mine), in which technology leaders unapologetically announced some of the changes set to arrive by 2025. Let’s look at a few of them:

“One major application of this new kind of computer will be the simulation of complex chemical reactions, a powerful tool that opens up new avenues in drug development.” They go on to say that the treatment of cancer will drastically change for the better.

I would love to see this, but my instincts tell me no, especially “no” unless there is money involved. Big Pharma has too much invested to let this happen. Years of research and millions of dollars aren’t going down the drain because some AI computer came up with a solution.

Then there’s: “By 2025, healthcare systems will adopt more preventative health approaches based on the developing science behind the health benefits of plant-rich, nutrient-dense diets.” I hardly think so. Unhealthy food just tastes too good. Might I add that India, a technology giant and a nuclear-armed nation, had 15% of its population malnourished as of 2021. Computers will not solve that problem, in India or anywhere else.

How about: “Technology drives data, data catalyzes knowledge, and knowledge enables empowerment. In tomorrow’s world, cancer will be managed like any chronic health condition —we will be able to precisely identify what we may be facing and be empowered to overcome it.” This is wishful thinking; it certainly won’t happen by 2025.

Computing, we are told, will become a culture in and of itself: “By 2025, the lines separating culture, information technology and health will be blurred. Engineering biology, machine learning and the sharing economy will establish a framework for decentralizing the healthcare continuum, moving it from institutions to the individual.” Far too much has been invested in institutions for this to happen, at least in the United States. The fiefdoms are entrenched, and they will capitulate only as a last resort.

Worried about where finance is going? No worries: “Improvements in AI will finally put access to wealth creation within reach of the masses. Financial advisors, who are knowledge workers, have been the mainstay of wealth management: using customized strategies to grow a small nest egg into a larger one. Since knowledge workers are expensive, access to wealth management has often meant you already need to be wealthy to preserve and grow your wealth. As a result, historically, wealth management has been out of reach of those who needed it most.

“Artificial intelligence is improving at such a speed that the strategies employed by these financial advisors will be accessible via technology, and therefore affordable for the masses.” The fact is that those who desperately need wealth management education haven’t the slightest inclination to concern themselves with wealth management advisors. Great idea, but precious few of the Great Unwashed will go along with this plan. You can educate the spendthrift lower classes all you wish; all they want to know is how much money they can get their hands on to spend right now. Saving for their future while they deny themselves maximum amusement in the present is quite repugnant to them. To their way of thinking, money was meant to be enjoyed, not consigned to some bank or broker, no matter how much it would help their future. The instant gratification mentality has reached full saturation. Nest eggs are to be prepared and served immediately, while still fresh.

Worried about internet privacy? No more: “Five years from now, privacy and data-centric security will have reached commodity status – and the ability for consumers to protect and control sensitive data assets will be viewed as the rule rather than the exception.” If this happens, Google and Facebook might as well fold up their tents and go home. By “tents,” I mean their lavish offices, complete with free Starbucks and massage therapists on call twenty-four hours a day. Honestly, it is as if this executive has not the slightest inkling of how Google and Facebook earn their revenue.

From Pew Research Center: “Fifty years after the first computer network was connected, most experts say digital life will mostly change humans’ existence for the better over the next 50 years. However, they warn this will happen only if people embrace reforms allowing better cooperation, security, basic rights and economic fairness.” Embrace reforms? No. Sorry. Not going to happen.

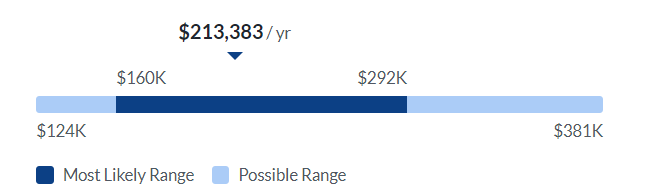

According to Glassdoor.com (2023), the average pay of a technology executive in the U.S. is $213,332 per year. I’m pretty sure there are other perks as well. Of course, they have to make their efforts look benevolent. None of them want to hear that their work is supporting thievery or facilitating evil and illegal behavior. Throughout history, the originators of technology have sought to make life better for their fellow humans, and on very special and utterly rare occasions, it has turned out that way.

Sources:

Future shocks: 17 technology predictions for 2025 | World Economic Forum (weforum.org)

Experts Optimistic About the Next 50 Years of Digital Life | Pew Research Center